Autopatching Linux Templates

If you missed the first post in my series around Windows Template patching, you can find it here:

This article follows the same path as the previous post: I go through the Nutanix workflow in detail, and cover the VMware workflow only where it differs.

Nutanix Linux workflow

Here is a breakdown of the general workflow for Linux templates on Nutanix:

Below, I cover the most important steps from each play in detail.

Play 1 - Start and clone

First step: Gather facts about template to patch

### Set template name based on OS version ###
- name: "Set template name for Ubuntu 22.04"
  ansible.builtin.set_fact:
    template_vm_name: "tmpl-ubnt-22.04-ansible"
    os_family: "ubuntu"
  when: linux_os_version == "Ubuntu_22.04"

- name: "Set template name for Ubuntu 24.04"
  ansible.builtin.set_fact:
    template_vm_name: "tmpl-ubnt-24.04-ansible"
    os_family: "ubuntu"
  when: linux_os_version == "Ubuntu_24.04"

- name: "Set template name for Rocky 9"
  ansible.builtin.set_fact:
    template_vm_name: "tmpl-rocky-9-ansible"
    os_family: "rocky"
  when: linux_os_version == "Rocky_9"

- name: "Set template name for Rocky 10"
  ansible.builtin.set_fact:
    template_vm_name: "tmpl-rocky-10-ansible"
    os_family: "rocky"
  when: linux_os_version == "Rocky_10"

We choose which template VM to update based on extra_vars. Compared to the Windows workflow, we have four different templates to take into account here.
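The four conditional tasks can also be collapsed into a single lookup. The following is only a sketch of that idea, not what the playbook above does: the `linux_templates` dictionary is a hypothetical name, while the template names and `linux_os_version` values are taken from the tasks above.

```yaml
# Sketch only: "linux_templates" is a hypothetical variable, not part of the original playbook.
- name: "Set template name and OS family from a lookup table"
  vars:
    linux_templates:
      "Ubuntu_22.04": { name: "tmpl-ubnt-22.04-ansible", family: "ubuntu" }
      "Ubuntu_24.04": { name: "tmpl-ubnt-24.04-ansible", family: "ubuntu" }
      "Rocky_9": { name: "tmpl-rocky-9-ansible", family: "rocky" }
      "Rocky_10": { name: "tmpl-rocky-10-ansible", family: "rocky" }
  ansible.builtin.set_fact:
    # An unknown linux_os_version makes this task fail instead of leaving the facts unset.
    template_vm_name: "{{ linux_templates[linux_os_version]['name'] }}"
    os_family: "{{ linux_templates[linux_os_version]['family'] }}"
```

With this shape, adding a fifth template means adding one dictionary entry rather than another task.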

Second step: Prepare the cloud-init file

Once we know which template to patch and which OS family it belongs to, we prepare the cloud-init file for the patching run.

### Prepare temporary cloud-init file ###
- name: "Nutanix: Prepare temporary cloud-init file"
  ansible.builtin.tempfile:
    state: file
    suffix: cloud-init-{{ temp_vm_name }}.cfg
  register: cloud_init_file

### Create cloud-init file for patching (does NOT remove template user) ###
- name: "Nutanix: Create Ubuntu cloud-init file for patching"
  ansible.builtin.template:
    src: "templates/cloud-init-patch-ubuntu.cfg"
    dest: "{{ cloud_init_file.path }}"
  when: os_family == "ubuntu"

- name: "Nutanix: Create Rocky cloud-init file for patching"
  ansible.builtin.template:
    src: "templates/cloud-init-patch-rocky.cfg"
    dest: "{{ cloud_init_file.path }}"
  when: os_family == "rocky"

We use the ansible.builtin.tempfile and ansible.builtin.template modules. There are two cloud-init-patch templates, one for Ubuntu and one for Rocky, because the configuration differs a bit between the two OS families.

Here's the content of the different cloud-init files, starting with the Ubuntu one:

#cloud-config
hostname: {{ template_vm_name }}
chpasswd:
  list: |
    template:{{ vault_vmw_tmpl_admin_password }}
  expire: False
write_files:
  - path: /etc/netplan/50-cloud-init.yaml
    content: |
      network:
        version: 2
        ethernets:
          {{ network_interface }}:
            dhcp4: true
            dhcp6: false
    permissions: '0600'
runcmd:
  - [ sh, -c, 'echo "template ALL=(ALL) NOPASSWD:ALL" > /etc/sudoers.d/template' ]
  - [ sh, -c, 'chmod 0440 /etc/sudoers.d/template' ]
  - [ sh, -c, 'rm -f /etc/netplan/99-network.yaml' ]
  - [ sh, -c, 'truncate -s 0 /root/.bash_history' ]
  - [ sh, -c, 'netplan apply' ]

Here is the Rocky Linux cloud-init:

#cloud-config
hostname: {{ template_vm_name }}
chpasswd:
  list: |
    template:{{ vault_vmw_tmpl_admin_password }}
  expire: False
write_files:
  - path: /etc/ssh/sshd_config.d/99-cloud-init.conf
    content: |
      PasswordAuthentication yes
      PermitRootLogin no
    owner: root:root
    permissions: '0644'
  - path: /etc/NetworkManager/system-connections/{{ network_interface }}.nmconnection
    content: |
      [connection]
      id={{ network_interface }}
      type=ethernet
      interface-name={{ network_interface }}
      [ethernet]
      [ipv4]
      method=auto
      [ipv6]
      addr-gen-mode=default
      method=disabled
      [proxy]
    owner: root:root
    permissions: '0600'
runcmd:
  - [ sh, -c, 'echo "template ALL=(ALL) NOPASSWD:ALL" > /etc/sudoers.d/template' ]
  - [ sh, -c, 'chmod 0440 /etc/sudoers.d/template' ]
  - [ sh, -c, 'truncate -s 0 /root/.bash_history' ]
  - [ sh, -c, 'hostnamectl set-hostname {{ template_vm_name }}' ]
  - [ sh, -c, 'nmcli connection delete "cloud-init {{ network_interface }}" || true' ]
  - [ sh, -c, 'nmcli connection delete "System {{ network_interface }}" || true' ]
  - [ sh, -c, 'nmcli connection reload' ]
  - [ sh, -c, 'nmcli connection up {{ network_interface }}' ]
  - [ rm, -f, /etc/ssh/sshd_config.d/50-cloud-init.conf ]
  - [ systemctl, restart, sshd ]

Both files set a known username and password and configure the network interface for DHCP, so the clone is ready for us to connect over SSH.
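As far as I know, recent cloud-init releases mark the `chpasswd.list` key as deprecated in favour of `chpasswd.users`. If your templates ship such a release, the password section of either file could be written as in the sketch below. This is an assumption about your cloud-init version, not something the playbooks above require, so check it against the version in your templates first.

```yaml
#cloud-config
# Sketch only: assumes a cloud-init release that supports chpasswd.users.
chpasswd:
  expire: false
  users:
    - name: template
      password: "{{ vault_vmw_tmpl_admin_password }}"
      type: text
```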

Third step: Wait for Network connection and SSH connection to be ready.

After the files are prepared, we clone the VMs to patch and wait for SSH to become stable.

### Clone template WITH cloud-init to staging network ###
- name: "Nutanix: Clone template to temporary VM with cloud-init"
  nutanix.ncp.ntnx_vms_clone:
    src_vm_uuid: "{{ template_uuid }}"
    vcpus: 4
    cores_per_vcpu: 1
    memory_gb: 24
    name: "{{ temp_vm_name }}"
    networks:
      - is_connected: true
        subnet:
          uuid: "6ae87b87-be51-4612-a51e-a15fa5536807"
    guest_customization:
      type: "cloud_init"
      script_path: "{{ cloud_init_file.path }}"
    timezone: Europe/Stockholm
  register: temp_vm_clone

### Monitor clone creation ###
- name: "Nutanix: Monitor clone creation status"
  ansible.builtin.assert:
    that:
      - temp_vm_clone.response is defined
      - temp_vm_clone.response.status.state == 'COMPLETE'

### Power on the VM ###
- name: "Nutanix: Power on temp VM"
  nutanix.ncp.ntnx_vms:
    state: power_on
    vm_uuid: "{{ temp_vm_clone.vm_uuid }}"

### Wait for VM to boot and get IP via DHCP ###
- name: "Nutanix Linux: Wait for VM to boot (initial delay)"
  ansible.builtin.pause:
    seconds: 60

### Fetch VM IP address from Nutanix (wait for valid IP, not link-local) ###
- name: "Nutanix Linux: Get VM IP address (with retry)"
  nutanix.ncp.ntnx_vms_info:
    vm_uuid: "{{ temp_vm_clone.vm_uuid }}"
  register: vm_info
  until:
    - vm_info.response.status.resources.nic_list is defined
    - vm_info.response.status.resources.nic_list | length > 0
    - vm_info.response.status.resources.nic_list[0].ip_endpoint_list is defined
    - vm_info.response.status.resources.nic_list[0].ip_endpoint_list | length > 0
    - (vm_info.response.status.resources.nic_list[0].ip_endpoint_list | map(attribute='ip') | reject('match', '^169\\.254\\..*') | list | length) > 0
  retries: 15
  delay: 20

### Wait for SSH to be ready ###
- name: "Nutanix: Wait for SSH port to be available"
  ansible.builtin.wait_for:
    host: "{{ temp_vm_ip }}"
    port: 22
    state: started
    timeout: 600
    delay: 30

Here we wait for the VM to become stable and verify that we can reach it over SSH.
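The SSH wait reads `temp_vm_ip`, which is set outside the tasks shown here. A minimal sketch of how it could be derived from the registered `vm_info`, reusing the same link-local filter as the retry condition (the task itself is my assumption, and it would sit between the IP lookup and the SSH wait):

```yaml
# Sketch only: picks the first address on the first NIC that is not link-local (169.254.x.x).
- name: "Nutanix Linux: Set temp VM IP address"
  ansible.builtin.set_fact:
    temp_vm_ip: >-
      {{ vm_info.response.status.resources.nic_list[0].ip_endpoint_list
         | map(attribute='ip')
         | reject('match', '^169\\.254\\..*')
         | first }}
```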

Last but not least, we add the temp VM to our inventory.

### Add temp VM to in-memory inventory for patching ###
- name: "Add temp VM to inventory for Linux Updates"
  ansible.builtin.add_host:
    name: "{{ temp_vm_ip }}"
    groups: linux_temp_patch
    ansible_host: "{{ temp_vm_ip }}"
    ansible_user: "template"
    ansible_password: "{{ vault_vmw_tmpl_admin_password }}"
    ansible_connection: ssh
    ansible_ssh_common_args: '-o StrictHostKeyChecking=no -o UserKnownHostsFile=/dev/null'
    ansible_become: true
    ansible_become_method: sudo
    ansible_become_password: "{{ vault_vmw_tmpl_admin_password }}"
    os_family: "{{ os_family }}"

As you can see, the workflow is very similar to the one used for Windows on Nutanix, adapted to work with Linux.

Play 2 - Patching

In the second play, we connect to the Temp VM and use Ansible built-in tools to patch the VM:

First step: Wait for SSH to become stable

### Wait for SSH to be truly ready ###
- name: "Linux: Wait for SSH to be stable"
  ansible.builtin.wait_for_connection:
    timeout: 300
    delay: 5

Second step: Patching

Here we need to take into account that we have two Linux families, first Ubuntu and then Rocky. The patching commands are quite different on the two.

Starting with the Ubuntu Workflow:

### Ubuntu patching block ###
- name: "Ubuntu: Update and upgrade packages"
  when: os_family == "ubuntu"
  block:
    - name: "Ubuntu: Update apt cache"
      ansible.builtin.apt:
        update_cache: yes
        cache_valid_time: 0

    - name: "Ubuntu: Upgrade all packages"
      ansible.builtin.apt:
        upgrade: dist
        autoremove: yes
        autoclean: yes
      register: apt_upgrade_result

    - name: "Ubuntu: Check if reboot is required"
      ansible.builtin.stat:
        path: /var/run/reboot-required
      register: reboot_required_file

    - name: "Ubuntu: Reboot if needed"
      ansible.builtin.reboot:
        reboot_timeout: 600
        post_reboot_delay: 30
      when: reboot_required_file.stat.exists

    - name: "Ubuntu: Clean up apt cache"
      ansible.builtin.apt:
        autoclean: yes
        autoremove: yes

    ### Second upgrade pass to catch any remaining updates ###
    - name: "Ubuntu: Second upgrade pass (catch remaining updates)"
      ansible.builtin.apt:
        update_cache: yes
        upgrade: dist
        autoremove: yes
        autoclean: yes
      register: apt_upgrade_second

    - name: "Ubuntu: Check if final reboot is required"
      ansible.builtin.stat:
        path: /var/run/reboot-required
      register: reboot_required_final

    - name: "Ubuntu: Reboot after second pass if needed"
      ansible.builtin.reboot:
        reboot_timeout: 600
        post_reboot_delay: 30
      when: reboot_required_final.stat.exists

Rocky:

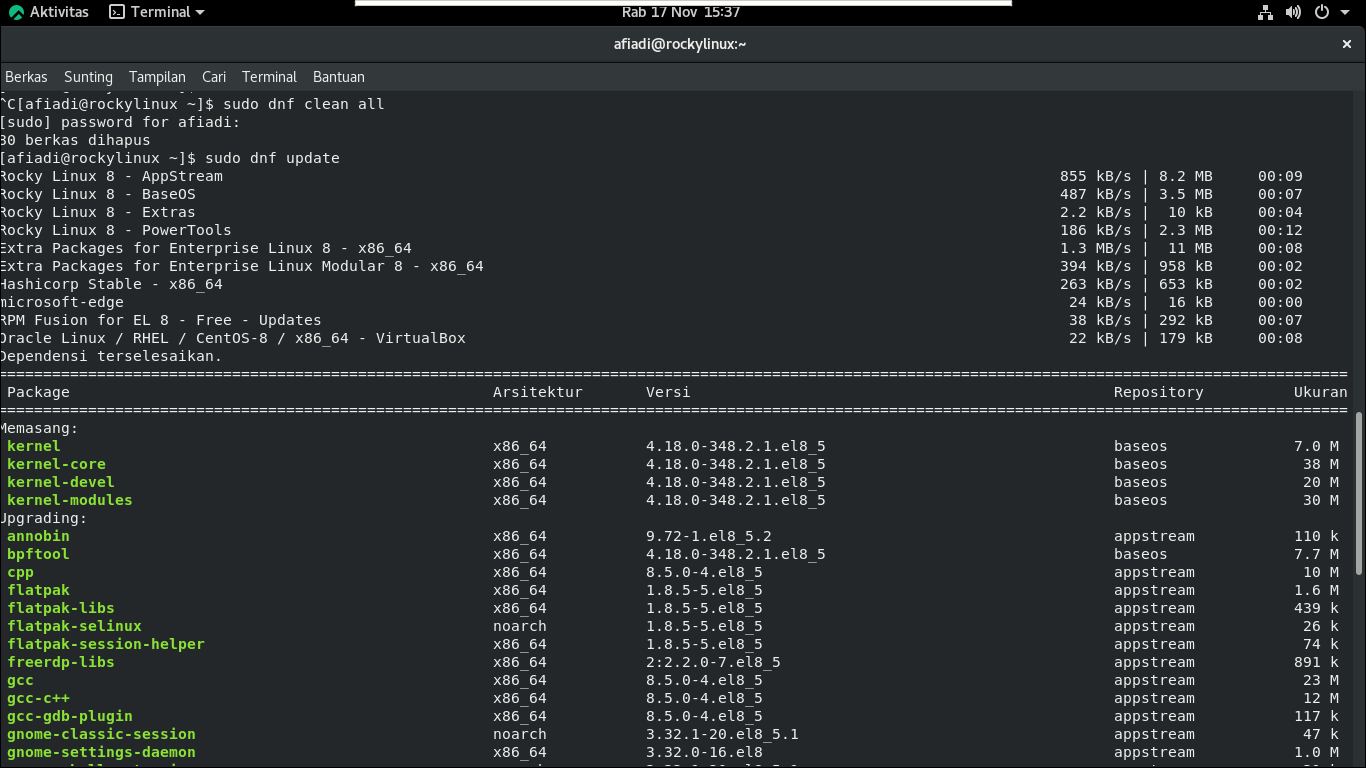

### Rocky patching block ###
- name: "Rocky: Update and upgrade packages"
  when: os_family == "rocky"
  block:
    - name: "Rocky: Upgrade all packages"
      ansible.builtin.dnf:
        name: "*"
        state: latest
        update_cache: yes
      register: dnf_upgrade_result

    - name: "Rocky: Remove unused packages"
      ansible.builtin.dnf:
        autoremove: yes

    - name: "Rocky: Check if reboot is required"
      ansible.builtin.command: needs-restarting -r
      register: needs_reboot
      failed_when: false
      changed_when: false

    - name: "Rocky: Reboot if needed"
      ansible.builtin.reboot:
        reboot_timeout: 600
        post_reboot_delay: 30
      when: needs_reboot.rc != 0

    - name: "Rocky: Clean up dnf cache"
      ansible.builtin.command: dnf clean all
      changed_when: false

    ### Second upgrade pass to catch any remaining updates ###
    - name: "Rocky: Second upgrade pass (catch remaining updates)"
      ansible.builtin.dnf:
        name: "*"
        state: latest
        update_cache: yes
      register: dnf_upgrade_second

    - name: "Rocky: Remove unused packages after second pass"
      ansible.builtin.dnf:
        autoremove: yes

    - name: "Rocky: Check if final reboot is required"
      ansible.builtin.command: needs-restarting -r
      register: needs_reboot_final
      failed_when: false
      changed_when: false

    - name: "Rocky: Final reboot if needed"
      ansible.builtin.reboot:
        reboot_timeout: 600
        post_reboot_delay: 30
      when: needs_reboot_final.rc != 0

As you can see, we use blocks with "when" conditions to decide which tasks run for each OS family.
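One detail worth noting in the Rocky block: `needs-restarting -r` exits with 1 when a reboot is needed and 0 when it is not. With `failed_when: false` and a `rc != 0` condition, any other exit code (for example when the tool is not installed) is also treated as "reboot needed". A stricter variant, shown only as a sketch of the idea and not as the playbook's actual behaviour, could look like this:

```yaml
# Sketch only: treat 0 and 1 as valid answers and fail on anything else.
- name: "Rocky: Check if reboot is required"
  ansible.builtin.command: needs-restarting -r
  register: needs_reboot
  changed_when: false
  failed_when: needs_reboot.rc not in [0, 1]

- name: "Rocky: Reboot if needed"
  ansible.builtin.reboot:
    reboot_timeout: 600
    post_reboot_delay: 30
  when: needs_reboot.rc == 1
```

The trade-off is that a missing tool now stops the run instead of triggering a harmless extra reboot.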

Third step: Cleanup

After we're done with the patching, we clean up. The logic is the same as before: we copy a cleanup script to the VM and run it in the background using ansible.builtin.shell. Because the script ends by powering off the VM, we then lose the SSH connection.

The cleanup phase is also based on OS family.

Ubuntu first:

### Ubuntu template cleanup - copy script and execute ###
- name: "Ubuntu: Copy cleanup script to VM"
  ansible.builtin.copy:
    src: files/cleanup-ubuntu-template.sh
    dest: /tmp/cleanup-template.sh
    mode: '0755'
  when: os_family == "ubuntu"

- name: "Ubuntu: Execute cleanup script in background"
  ansible.builtin.shell: "nohup /tmp/cleanup-template.sh >/dev/null 2>&1 &"
  async: 1
  poll: 0
  when: os_family == "ubuntu"

- name: "Ubuntu: Verify cleanup script started"
  ansible.builtin.shell: "sleep 2 && test -f /var/log/gdm-template-cleanup.log"
  register: cleanup_started
  failed_when: false
  when: os_family == "ubuntu"

- name: "Ubuntu: Display cleanup status"
  ansible.builtin.debug:
    msg: "{{ 'Cleanup script started successfully' if cleanup_started.rc == 0 else 'WARNING: Cleanup script may not have started' }}"
  when: os_family == "ubuntu"

And here is the Ubuntu cleanup script:

#!/bin/bash

echo "=== Starting Ubuntu template cleanup ==="

# Log to file

LOG_FILE="/var/log/gdm-template-cleanup.log"

exec > >(tee -a "$LOG_FILE") 2>&1

echo "Cleanup started: $(date)"

# 1. Clean apt cache

echo "Cleaning apt cache..."

apt-get clean 2>&1

echo "Running apt-get autoremove..."

apt-get autoremove -y 2>&1

echo "Running apt-get autoclean..."

apt-get autoclean -y 2>&1

echo "Removing apt lists cache..."

rm -rfv /var/lib/apt/lists/* 2>&1

# 2. Clean temporary files

echo "Cleaning temporary files..."

rm -rfv /tmp/* 2>&1 | head -20

rm -rfv /var/tmp/* 2>&1 | head -20

echo "Temporary files cleaned."

# 3. Clean cloud-init state

echo "Cleaning cloud-init state..."

cloud-init clean --logs --machine-id --seed 2>&1

# 4. Clean machine-id

echo "Resetting machine-id..."

rm -fv /etc/machine-id 2>&1

touch /etc/machine-id && echo "Created empty /etc/machine-id"

rm -fv /var/lib/dbus/machine-id 2>&1

ln -sv /etc/machine-id /var/lib/dbus/machine-id 2>&1

# 4b. Clean systemd random seed

echo "Removing systemd random-seed..."

rm -fv /var/lib/systemd/random-seed 2>&1

# 4c. Clean udev persistent network rules

echo "Removing udev persistent network rules..."

rm -fv /etc/udev/rules.d/70-persistent-net.rules 2>&1

rm -fv /etc/udev/rules.d/75-persistent-net-generator.rules 2>&1

# 5. Clean SSH host keys

echo "Removing SSH host keys..."

rm -fv /etc/ssh/ssh_host_* 2>&1

# 5b. Clean SSH authorized_keys and .ssh directories

echo "Removing SSH authorized_keys and .ssh directories..."

rm -rfv /root/.ssh/* 2>&1

rm -fv /home/*/.ssh/authorized_keys 2>&1

rm -fv /home/*/.ssh/known_hosts 2>&1

echo "SSH keys cleaned."

# 6. Clean all logs

echo "Truncating all logs..."

find /var/log -type f -name "*.log" -exec truncate -s 0 {} \; -print 2>&1 | head -30

echo "Removing cloud-init logs..."

rm -rfv /var/log/cloud* 2>&1

echo "Removing audit logs..."

rm -rfv /var/log/audit/* 2>&1

echo "Removing wtmp/btmp..."

rm -fv /var/log/wtmp /var/log/btmp 2>&1

truncate -s 0 /var/log/lastlog 2>&1 && echo "Truncated lastlog"

# 6b. Clean systemd journal logs

echo "Cleaning systemd journal..."

journalctl --vacuum-time=1s 2>&1 || echo "journalctl vacuum skipped"

rm -rfv /var/log/journal/* 2>&1 | head -10

rm -rfv /run/log/journal/* 2>&1 | head -10

echo "Journal cleaned."

# 7. Clean bash history

echo "Cleaning bash history..."

truncate -s 0 /root/.bash_history && echo "Truncated root bash_history"

truncate -s 0 /home/template/.bash_history 2>&1 && echo "Truncated template bash_history"

history -c && echo "Cleared current session history"

# 8. Clean cron and at jobs

echo "Cleaning cron and at jobs..."

rm -rfv /var/spool/cron/* 2>&1

rm -rfv /var/spool/at/* 2>&1

# 9. Remove template sudoers file (NOPASSWD not needed in template)

echo "Removing template sudoers file..."

rm -fv /etc/sudoers.d/template 2>&1 && echo "Template sudoers file removed"

# 10. Clean all netplan files and disable wait-online service

echo "Removing all netplan yaml files..."

rm -fv /etc/netplan/*.yaml 2>&1

echo "Disabling systemd-networkd-wait-online.service to prevent boot delays..."

systemctl disable systemd-networkd-wait-online.service 2>&1 || echo "Service already disabled or not found"

# 11. Clean DHCP leases

echo "Cleaning DHCP leases..."

rm -fv /var/lib/dhcp/* 2>&1

rm -fv /var/lib/dhclient/* 2>&1

# 12. Final log entry

echo "All cleanup completed: $(date)"

echo "Shutting down in 5 seconds..."

# 13. Poweroff

sleep 5 2>&1

poweroff 2>&1

And then we have the logic to clean up the Rocky Template to be ready for cloning:

### Rocky template cleanup - copy script and execute ###
- name: "Rocky: Copy cleanup script to VM"
  ansible.builtin.copy:
    src: files/cleanup-rocky-template.sh
    dest: /tmp/cleanup-template.sh
    mode: '0755'
  when: os_family == "rocky"

- name: "Rocky: Execute cleanup script in background"
  ansible.builtin.shell: "nohup /tmp/cleanup-template.sh >/dev/null 2>&1 &"
  async: 1
  poll: 0
  when: os_family == "rocky"

- name: "Rocky: Verify cleanup script started"
  ansible.builtin.shell: "sleep 2 && test -f /var/log/gdm-template-cleanup.log"
  register: cleanup_started
  failed_when: false
  when: os_family == "rocky"

- name: "Rocky: Display cleanup status"
  ansible.builtin.debug:
    msg: "{{ 'Cleanup script started successfully' if cleanup_started.rc == 0 else 'WARNING: Cleanup script may not have started' }}"
  when: os_family == "rocky"

Rocky cleanup script:

#!/bin/bash

echo "=== Starting Rocky template cleanup ==="

# Log to file

LOG_FILE="/var/log/gdm-template-cleanup.log"

exec > >(tee -a "$LOG_FILE") 2>&1

echo "Cleanup started: $(date)"

# 1. Clean dnf cache

echo "Cleaning dnf cache..."

dnf clean all 2>&1

echo "Removing dnf/yum cache directories..."

rm -rfv /var/cache/dnf/* 2>&1 | head -20

rm -rfv /var/cache/yum/* 2>&1 | head -20

# 2. Clean temporary files

echo "Cleaning temporary files..."

rm -rfv /tmp/* 2>&1 | head -20

rm -rfv /var/tmp/* 2>&1 | head -20

echo "Temporary files cleaned."

# 3. Clean cloud-init state

echo "Cleaning cloud-init state..."

cloud-init clean --logs --machine-id --seed 2>&1

# 4. Clean machine-id

echo "Resetting machine-id..."

rm -fv /etc/machine-id 2>&1

touch /etc/machine-id && echo "Created empty /etc/machine-id"

rm -fv /var/lib/dbus/machine-id 2>&1

ln -sv /etc/machine-id /var/lib/dbus/machine-id 2>&1

# 4b. Clean systemd random seed

echo "Removing systemd random-seed..."

rm -fv /var/lib/systemd/random-seed 2>&1

# 4c. Clean udev persistent network rules

echo "Removing udev persistent network rules..."

rm -fv /etc/udev/rules.d/70-persistent-net.rules 2>&1

rm -fv /etc/udev/rules.d/75-persistent-net-generator.rules 2>&1

# 5. Clean SSH host keys

echo "Removing SSH host keys..."

rm -fv /etc/ssh/ssh_host_* 2>&1

# 5b. Clean SSH authorized_keys and .ssh directories

echo "Removing SSH authorized_keys and .ssh directories..."

rm -rfv /root/.ssh/* 2>&1

rm -fv /home/*/.ssh/authorized_keys 2>&1

rm -fv /home/*/.ssh/known_hosts 2>&1

echo "SSH keys cleaned."

# 6. Clean all logs

echo "Truncating all logs..."

find /var/log -type f -name "*.log" -exec truncate -s 0 {} \; -print 2>&1 | head -30

echo "Removing cloud-init logs..."

rm -rfv /var/log/cloud* 2>&1

echo "Removing audit logs..."

rm -rfv /var/log/audit/* 2>&1

echo "Removing wtmp/btmp..."

rm -fv /var/log/wtmp /var/log/btmp 2>&1

truncate -s 0 /var/log/lastlog 2>&1 && echo "Truncated lastlog"

# 6b. Clean systemd journal logs

echo "Cleaning systemd journal..."

journalctl --vacuum-time=1s 2>&1 || echo "journalctl vacuum skipped"

rm -rfv /var/log/journal/* 2>&1 | head -10

rm -rfv /run/log/journal/* 2>&1 | head -10

echo "Journal cleaned."

# 7. Clean bash history

echo "Cleaning bash history..."

truncate -s 0 /root/.bash_history && echo "Truncated root bash_history"

truncate -s 0 /home/template/.bash_history 2>&1 && echo "Truncated template bash_history"

history -c && echo "Cleared current session history"

# 8. Clean cron and at jobs

echo "Cleaning cron and at jobs..."

rm -rfv /var/spool/cron/* 2>&1

rm -rfv /var/spool/at/* 2>&1

# 9. Remove template sudoers file (NOPASSWD not needed in template)

echo "Removing template sudoers file..."

rm -fv /etc/sudoers.d/template 2>&1 && echo "Template sudoers file removed"

# 10. Clean NetworkManager connections and disable wait-online service

echo "Cleaning NetworkManager connections..."

rm -rfv /etc/NetworkManager/system-connections/* 2>&1

echo "Disabling NetworkManager-wait-online.service to prevent boot delays..."

systemctl disable NetworkManager-wait-online.service 2>&1 || echo "Service already disabled or not found"

# 11. Clean DHCP leases

echo "Cleaning DHCP leases..."

rm -fv /var/lib/dhcp/* 2>&1

rm -fv /var/lib/dhclient/* 2>&1

# 12. Final log entry

echo "All cleanup completed: $(date)"

echo "Shutting down in 5 seconds..."

# 13. Poweroff

sleep 5

poweroff
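The Ubuntu and Rocky cleanup tasks differ only in the script file name, and `os_family` already matches the middle part of both names. As a sketch of one possible simplification (not how the playbook above is written), the two task sets could be merged like this:

```yaml
# Sketch only: relies on the scripts being named cleanup-ubuntu-template.sh
# and cleanup-rocky-template.sh, as in the tasks above.
- name: "Linux: Copy cleanup script for the detected OS family"
  ansible.builtin.copy:
    src: "files/cleanup-{{ os_family }}-template.sh"
    dest: /tmp/cleanup-template.sh
    mode: '0755'

- name: "Linux: Execute cleanup script in background"
  ansible.builtin.shell: "nohup /tmp/cleanup-template.sh >/dev/null 2>&1 &"
  async: 1
  poll: 0
```

Keeping separate tasks per family, as in the original, does make the play output a little more explicit about which script ran.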

Play 3 - Delete old templates

Since we patched a clone of the old template, we need to make sure everything went smoothly and then replace the old template with the patched clone. That is what the third play does.

First, we wait until the cleanup script has finished and shut down the VM:

### Wait for VM to be powered off after shutdown ###
- name: "Nutanix: Wait for temp VM to be powered off"
  nutanix.ncp.ntnx_vms_info:
    vm_uuid: "{{ temp_vm_clone.vm_uuid }}"
  register: power_state
  retries: 40
  delay: 30
  until: power_state.response.status.resources.power_state == "OFF"

As in the Windows workflow, Nutanix now leaves the cloud-init CD-ROM attached to the VM, and I do not know why. Let's remove it so we do not run into problems later on:

### Remove CD-ROM with cloud-init.cfg before making template ###
- name: "Nutanix: Get current VM configuration with CD-ROM info"
  nutanix.ncp.ntnx_vms_info:
    vm_uuid: "{{ temp_vm_clone.vm_uuid }}"
  register: vm_config

- name: "Nutanix: Extract CD-ROM list from disk list"
  ansible.builtin.set_fact:
    cdrom_uuids: "{{ vm_config.response.status.resources.disk_list | selectattr('device_properties.device_type', 'equalto', 'CDROM') | map(attribute='uuid') | list }}"

- name: "Nutanix: Remove ALL CD-ROMs from VM (unmount cloud-init.cfg and any other ISOs)"
  nutanix.ncp.ntnx_vms:
    state: present
    vm_uuid: "{{ temp_vm_clone.vm_uuid }}"
    disks:
      - state: absent
        uuid: "{{ item }}"
  loop: "{{ cdrom_uuids }}"
  when: cdrom_uuids | length > 0

Then we rename the temp VM so that it takes over the name of the old, unpatched template:

### Get old template info BEFORE renaming (needed for CI number extraction) ###
- name: "Nutanix: Get old template info"
  nutanix.ncp.ntnx_vms_info:
    filter:
      vm_name: "{{ template_vm_name }}"
    kind: vm
  register: old_template_info

### Rename old template ###
- name: "Nutanix: Rename old template with timestamp"
  nutanix.ncp.ntnx_vms:
    state: present
    vm_uuid: "{{ old_template_info.response.entities[0].metadata.uuid }}"
    name: "{{ template_vm_name }}-old-{{ playbook_timestamp }}"
    desc: "Old template - replaced {{ playbook_date }}"

### Rename temp VM to template name ###
- name: "Nutanix: Rename patched VM to template name"
  nutanix.ncp.ntnx_vms:
    state: present
    vm_uuid: "{{ temp_vm_clone.vm_uuid }}"
    name: "{{ template_vm_name }}"
    desc: "Patched template - {{ playbook_date }}"

If all those steps succeed, we delete the old template:

### Delete old template after successful update ###
- name: "Nutanix: Delete old template (now replaced)"
  nutanix.ncp.ntnx_vms:
    state: absent
    vm_uuid: "{{ old_template_info.response.entities[0].metadata.uuid }}"
  when:
    - old_template_info.response.entities is defined
    - old_template_info.response.entities | length > 0

And we're done with the Linux workflow on Nutanix. As you can see, it's almost identical to the Windows patching workflow on Nutanix.
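The delete task guards against an empty lookup result, but the rename of the old template indexes `entities[0]` directly. A small sketch of a guard that could run right after the "Get old template info" task, so the play stops with a clear message if the name lookup returns zero or several VMs (the task is my addition, using only variables from the tasks above):

```yaml
# Sketch only: fail early unless exactly one VM carries the template name.
- name: "Nutanix: Assert exactly one old template was found"
  ansible.builtin.assert:
    that:
      - old_template_info.response.entities is defined
      - old_template_info.response.entities | length == 1
    fail_msg: "Expected exactly one VM named {{ template_vm_name }}"
```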

VMware Linux workflow

There is also a workflow for Linux on VMware. I will not go through the VMware workflow in detail, but I will go through the steps that differ from the Nutanix Playbook.

First, here is the general workflow for VMware Linux. As you can see, it's almost identical.

Play 1 - Start and clone

This play is almost identical to the Nutanix Linux one, except that we use VMware-specific modules:

### Prepare temporary cloud-init file ###
- name: "VMware: Prepare temporary cloud-init file"
  ansible.builtin.tempfile:
    state: file
    suffix: cloud-init-patch-{{ temp_vm_name }}.cfg
  register: cloud_init_file

### Create cloud-init file for patching ###
- name: "VMware: Create Ubuntu cloud-init file for patching"
  ansible.builtin.template:
    src: "templates/cloud-init-patch-ubuntu.cfg"
    dest: "{{ cloud_init_file.path }}"
  when: os_family == "ubuntu"

- name: "VMware: Create Rocky cloud-init file for patching"
  ansible.builtin.template:
    src: "templates/cloud-init-patch-rocky.cfg"
    dest: "{{ cloud_init_file.path }}"
  when: os_family == "rocky"

### Clone template ###
- name: "VMware: Clone template to temporary VM"
  vmware.vmware_rest.vcenter_vm:
    placement:
      folder: "{{ vmw_templates_folder }}"
      cluster: "{{ vmw_cluster_info }}"
    name: "{{ temp_vm_name }}"
    source: "{{ template_id }}"
    state: clone
    power_on: false
  register: vmw_clone_result

### Change NIC to staging network ###
- name: "VMware: Change portgroup to staging network"
  vmware.vmware_rest.vcenter_vm_hardware_ethernet:
    vm: "{{ vmw_clone_result.id }}"
    nic: "{{ new_vmw_nic.value[0].nic }}"
    backing:
      type: DISTRIBUTED_PORTGROUP
      network: "{{ vmw_staging_portgroup_id }}"
    start_connected: true

### Customize VM with cloud-init ###
- name: "VMware: Customize the VM with cloud-init for patching"
  vmware.vmware_rest.vcenter_vm_guest_customization:
    vm: "{{ vmw_clone_result.id }}"
    global_DNS_settings:
      dns_suffix_list: []
      dns_servers: []
    interfaces: []
    configuration_spec:
      cloud_config:
        type: CLOUDINIT
        cloudinit:
          metadata: |
            instance-id: "{{ temp_vm_name }}-instance"
            hostname: "{{ template_vm_name }}"
            local-hostname: "{{ template_vm_name }}"
          userdata: "{{ lookup('file', cloud_init_file.path) }}"

We're using open-vm-tools, so updates to VMware Tools are handled by the package patching in Play 2.
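The portgroup task reads `new_vmw_nic`, which is registered outside the excerpt shown. A minimal sketch of how it could be populated, assuming the `vmware.vmware_rest.vcenter_vm_hardware_ethernet_info` module and vCenter connection details supplied through environment variables or module defaults (both are my assumptions):

```yaml
# Sketch only: would run after the clone task and before the portgroup change.
- name: "VMware: List NICs on the cloned VM"
  vmware.vmware_rest.vcenter_vm_hardware_ethernet_info:
    vm: "{{ vmw_clone_result.id }}"
  register: new_vmw_nic
```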

Play 2 - Patching

Play 2 is exactly the same as in the Nutanix workflow, including the cleanup. Easy peasy :)

Play 3 - Cleanup of old template etc

Here the general workflow is the same as the Nutanix one, but we use VMware-specific Ansible modules:

### Wait for VM to be powered off ###
- name: "VMware: Wait for temp VM to be powered off"
  vmware.vmware_rest.vcenter_vm_power_info:
    vm: "{{ temp_vm_clone_id }}"
  register: power_state
  retries: 40
  delay: 15
  until: power_state.value.state == "POWERED_OFF"

### Get old template UUID for rename ###
- name: "VMware: Get old template UUID"
  community.vmware.vmware_guest_info:
    datacenter: "GDM"
    name: "{{ template_vm_name }}"
  register: old_template_facts

### Rename old template with timestamp ###
- name: "VMware: Rename old template with timestamp"
  community.vmware.vmware_guest:
    datacenter: "GDM"
    cluster: "{{ vmware_cluster_name }}"
    uuid: "{{ old_template_facts.instance.hw_product_uuid }}"
    name: "{{ template_vm_name }}-old-{{ playbook_timestamp }}"

### Get patched VM UUID for rename ###
- name: "VMware: Get patched VM UUID"
  community.vmware.vmware_guest_info:
    datacenter: "GDM"
    name: "{{ temp_vm_name }}"
  register: patched_vm_facts

### Rename patched VM to template name ###
- name: "VMware: Rename patched VM to template name"
  community.vmware.vmware_guest:
    datacenter: "GDM"
    cluster: "{{ vmware_cluster_name }}"
    uuid: "{{ patched_vm_facts.instance.hw_product_uuid }}"
    name: "{{ template_vm_name }}"

### Delete old template after successful rename ###
- name: "VMware: Delete old template (now replaced)"
  community.vmware.vmware_guest:
    datacenter: "GDM"
    cluster: "{{ vmware_cluster_name }}"
    uuid: "{{ old_template_facts.instance.hw_product_uuid }}"
    state: absent
    force: true

Summary

So there it is. Automated patching of Ubuntu and Rocky templates following the same logic as the Windows Automation.